Lab Companion

Chemists spent excessive time on manual tasks and searching messy formula portfolios instead of focusing on experimentation and science.

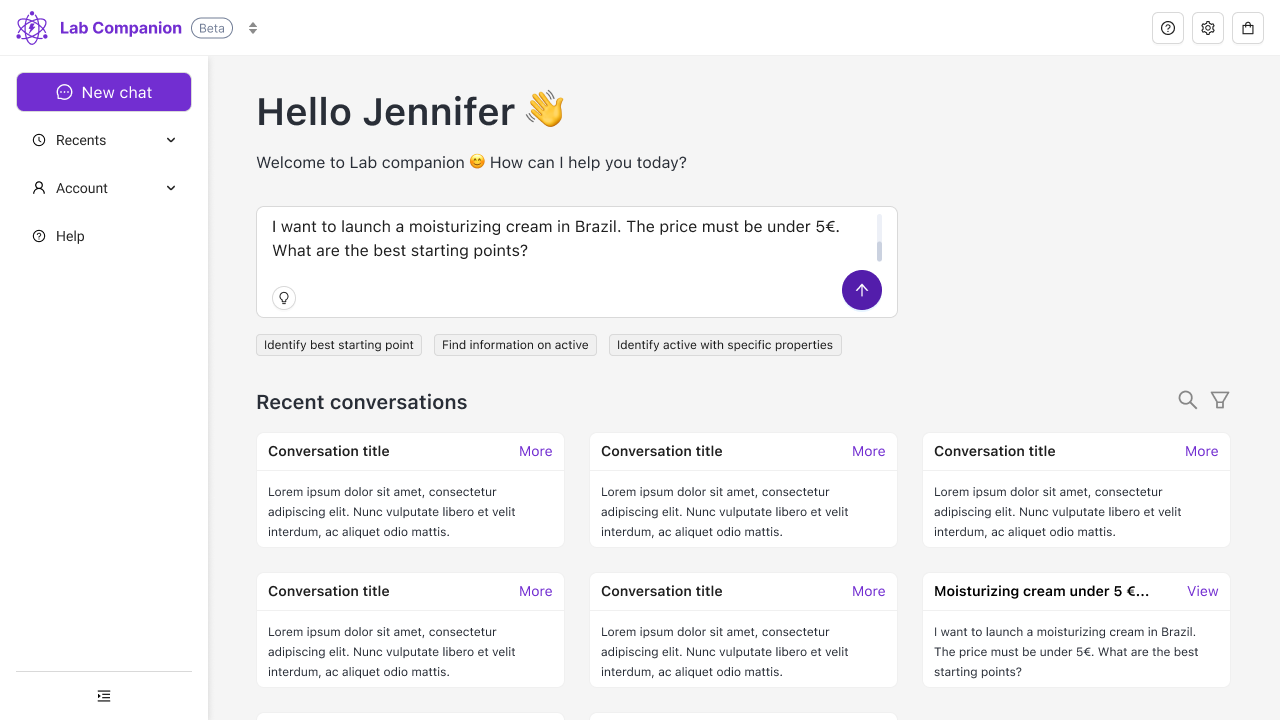

Designed a dual-mode AI interface with:

- Manual parametric controls for precision

- Natural language processing for exploration

- Full transparency and user control

- Productivity and satisfaction improved significantly

- Earned validation for global multi-division rollout

- Key learning: transparency keeps users in control

I designed an AI agent for L'Oréal chemists to automate repetitive tasks like searching formula portfolios. The initial LLM chatbot approach failed because chemists needed reliability, not magic. I pivoted to a dual-mode interface combining manual parametric controls with natural language exploration. The key insight was that transparency beats opacity—chemists needed to see what parameters drove the results, not receive mysterious AI recommendations. The tool earned validation for global rollout after improving both productivity and user satisfaction.

Overview

Lab Companion is an AI agent built for L’Oréal’s chemists. The idea was straightforward: automate the repetitive, low-value tasks that eat into their time, so they can focus on what actually matters, which is experimentation. What happened along the way was a lesson in what it really means to design AI-assisted tools for people who don’t trust them yet.

Team: BCG X (PM, developers, data scientists) + L’Oréal (PO, tech lead, data scientist, architect)

Location: Paris, France

The Challenge

L’Oréal’s R&I laboratories operate more like craftsmanship ateliers than standardized production lines. Each chemist, each lab, has its own way of working. There are no universal processes. Data collected during work isn’t always recorded in the systems. And knowledge gets siloed: a formula developed in one lab may be quietly reinvented elsewhere without anyone knowing.

The most concrete pain point sat at the intersection of the lab and the marketing team. When a brief came in (for example, “moisturizing cream for women over 50 in Brazil”), a chemist had to manually search across a vast and often messy formula portfolio to find viable starting points. This meant contacting colleagues, digging through legacy tools, and relying heavily on personal experience and network. Junior chemists who hadn’t yet built that network were often left floundering.

The back-and-forth between lab and marketing to converge on a final formula selection was slow and inefficient. Chemists were frustrated, not because the work was hard, but because so much of their time was going to administration and search instead of actual science.

Research

With only three months to deliver a functional pilot, there was no time for a lengthy discovery phase. We ran guerrilla research: a group of five super-users, lab visits, and semi-structured interviews to understand how chemists actually worked, what tools they used, and where the friction was.

A few things stood out clearly. Chemists are experts. They rely on intuition built over years of practice, and they’re protective of that expertise. Any tool that felt like it was overriding or replacing their judgment would be rejected. The tool had to feel like a collaborator.

There was also visible anxiety about AI in the room. Some chemists worried, quietly or openly, about being replaced. That wasn’t a fringe concern; it was something the design had to take seriously.

First Iteration: The Pilot

I built a clickable prototype in Figma and presented it to users early. It was well received, and development started quickly. Perhaps too quickly, in hindsight.

BCG X had committed to delivering a working pilot for the skincare division within three months. The political context was significant: this was a high-visibility innovation initiative for L’Oréal, and there was pressure from multiple directions to move fast. The prototype went into development, and three months later, the pilot was live with around forty chemists.

In practice, the formula recommendations were generally relevant. Users did feel they were saving time. But cracks appeared quickly.

The underlying LLM was non-deterministic by design, which is, of course, the nature of language models. For a scientific environment, this was a real problem. A chemist asking the same question three times expected the same answer three times. That wasn’t what they got: the model hallucinated and outputs varied. Users had to rephrase, iterate, and second-guess.

“It must be the way I’m asking.”

“I’m probably too old for this kind of thing.”

What was striking was how users internalized the blame. Instead of questioning the tool, they questioned themselves. This is a pattern that became central to my thinking: when a tool presents itself as all-knowing and opaque, users surrender their critical distance. The magic aesthetic of AI used in most of the tools (the wands, the purple stars, the sense of wizardry) doesn’t help. It actively makes things worse.

The Pivot

After BCG X rolled off the project, we took stock. Bugs, user frustration, and the structural limitations of building on a pure LLM foundation had put the project at risk.

I had raised concerns about the LLM-centric approach early in the process. My instinct as a designer was that a chatbot wasn’t necessarily the right solution to this specific problem, not because AI was wrong, but because the non-determinism of LLMs was in direct tension with what chemists needed: reliability, transparency, and a sense of control.

With the core team (PO, data science, dev) I ran a thorough audit of the pilot using real user feedback. We rebuilt the concept around a different principle: use generative AI as an enhancement, not as a foundation.

The Solution: Dual-Mode Interface

The solution was a dual-mode interface. Users could switch at any point between:

- Manual Mode (also called the brief editor): a structured, parametric interface where chemists could set precise criteria such as performance, price, biodegradability, and origin. This was deterministic, predictable, and transparent.

- Automatic Mode: a natural language interface powered by the LLM, for when users wanted to explore freely or translate a brief quickly.

Crucially, both modes were synchronized. A selection made in manual mode was immediately reflected in automatic mode, and vice versa. The two weren’t competing approaches; they were two views of the same underlying query.

This meant chemists could always see exactly what parameters were driving the results. They could start with a natural language prompt, then refine manually. Or build a precise filter set, then ask the AI to explain or expand on it. The opacity that had caused so much anxiety in the pilot was replaced with something legible.
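As a rough illustration of the "two views of the same underlying query" idea, here is a minimal Python sketch. It is not the production implementation: the criteria fields, the `QueryStore` class, and the sample formulas are all hypothetical, and `parse_prompt` is a toy keyword matcher standing in for the LLM step. The point it shows is that both modes write explicit parameters into one shared state, and that the search itself is a plain deterministic filter over those parameters.

```python
from dataclasses import dataclass, field, asdict
from typing import Callable, Dict, List, Optional


@dataclass
class BriefQuery:
    # Hypothetical criteria; the real brief editor's fields are assumptions here.
    max_price: Optional[float] = None
    min_biodegradability: Optional[float] = None
    origin: Optional[str] = None


@dataclass
class QueryStore:
    """Single source of truth that both modes read from and write to."""
    query: BriefQuery = field(default_factory=BriefQuery)
    listeners: List[Callable[[BriefQuery], None]] = field(default_factory=list)

    def subscribe(self, listener: Callable[[BriefQuery], None]) -> None:
        self.listeners.append(listener)

    def update(self, **changes) -> None:
        # Apply the changes, then notify every view so both stay in sync.
        for name, value in changes.items():
            if name not in asdict(self.query):
                raise KeyError(f"unknown criterion: {name}")
            setattr(self.query, name, value)
        for notify in self.listeners:
            notify(self.query)


def parse_prompt(prompt: str) -> Dict[str, object]:
    """Toy stand-in for the LLM: turns a prompt into explicit criteria."""
    text = prompt.lower()
    changes: Dict[str, object] = {}
    if "biodegradable" in text:
        changes["min_biodegradability"] = 0.9
    if "brazil" in text:
        changes["origin"] = "Brazil"
    return changes


def search(formulas: List[dict], query: BriefQuery) -> List[str]:
    """Deterministic filter: the same query always returns the same formulas."""
    results = []
    for f in formulas:
        if query.max_price is not None and f["price"] > query.max_price:
            continue
        if (query.min_biodegradability is not None
                and f["biodegradability"] < query.min_biodegradability):
            continue
        if query.origin is not None and f["origin"] != query.origin:
            continue
        results.append(f["name"])
    return results


# Both views subscribe to the same store and record what they would display.
store = QueryStore()
manual_view: List[dict] = []
automatic_view: List[dict] = []
store.subscribe(lambda q: manual_view.append(asdict(q)))
store.subscribe(lambda q: automatic_view.append(asdict(q)))

store.update(max_price=12.0)                                    # edit made in manual mode
store.update(**parse_prompt("biodegradable cream for Brazil"))  # edit made in automatic mode

# Invented sample data, for illustration only.
formulas = [
    {"name": "F-001", "price": 10.0, "biodegradability": 0.95, "origin": "France"},
    {"name": "F-002", "price": 11.0, "biodegradability": 0.92, "origin": "Brazil"},
    {"name": "F-003", "price": 20.0, "biodegradability": 0.99, "origin": "Brazil"},
]
print(search(formulas, store.query))
```

In this sketch the language model only ever proposes parameter changes; it never produces the results directly. That is one way to keep the natural language path legible: whatever the prompt was, the chemist can read the resulting criteria in the brief editor and adjust them by hand.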

Results

The results were meaningful:

- Productivity improved: chemists spent less time on administrative search tasks

- User satisfaction improved: the tool felt like a companion, not a replacement

- Multi-division validation: the product earned enough confidence internally for rollout across L’Oréal’s global R&I ecosystem, beyond just skincare

For me personally, the most significant outcome was simpler: I managed to make my concerns heard. By running a precise audit grounded in real user feedback, I was able to demonstrate why the initial approach was insufficient and propose a direction that held up.

Key Learnings

This was my first AI agent project as a solo designer, and it came with a steep learning curve on multiple fronts.

The “Magic” Problem

The thing I keep coming back to is the “magic” problem. AI tools are often positioned as magical — the visual language, the branding, the product copy all lean into it. But magic is profoundly disempowering for users. When something is magic, you can’t understand it, you can’t predict it, and when it fails, you assume you did something wrong. That’s a terrible basis for a professional tool used in a scientific environment.

Transparency is Non-Negotiable

Transparency isn’t just a nice-to-have in AI design. It’s what keeps users in the driver’s seat. In a context where the tool directly touches someone’s professional expertise, and where some users are already anxious about being replaced, the stakes are even higher.

Escalate Concerns Early

If I were to do this again, I would escalate my concerns about the LLM approach earlier and more forcefully. The political dynamics of the project made that difficult, but the cost of not doing so showed up in the pilot. That’s a lesson I’ve carried forward.

Conclusion

Lab Companion taught me that designing for AI isn’t about making the technology work; it’s about making it work for people. Chemists didn’t need a magical black box. They needed a transparent, controllable tool that respected their expertise and augmented their capabilities without replacing their judgment.

The pivot from an LLM-centric chatbot to a dual-mode interface wasn’t just a technical change; it was a fundamental shift in how we thought about the relationship between human expertise and artificial intelligence. In the end, the best AI tools are the ones that make users feel more capable, not less.